Building a highly available Kubernetes cluster

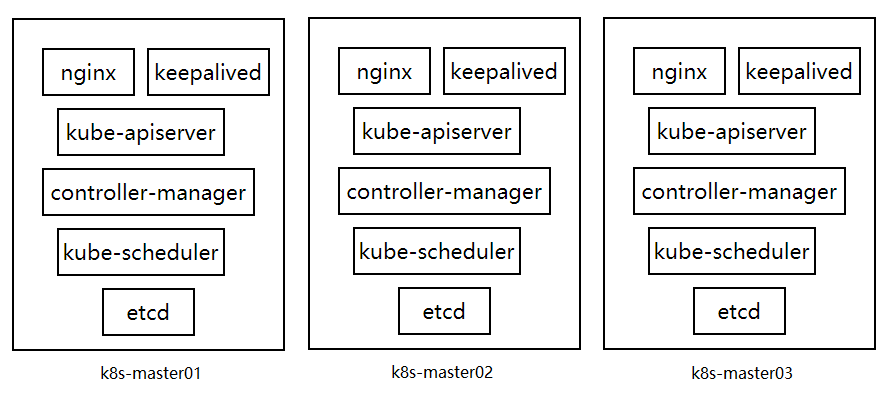

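kube-apiserver, kube-controller-manager and kube-scheduler keep no state of their own, so any number of instances of each can be run. All kube-apiserver instances can serve requests at the same time behind a load balancer, while kube-controller-manager and kube-scheduler use leader election so that only one instance acts on a given task (creating a Pod, for example) at any moment. etcd is stateful and relies on a majority quorum, so run an odd number of members (3 or 5 is typical): an even-sized cluster tolerates no more failures than the next smaller odd size. The diagram above shows a highly available, load-balanced architecture.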
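The recommendation for odd member counts follows from the majority rule. The sketch below only does the arithmetic; the function names are made up for this illustration.

```shell
# Quorum size and tolerated failures for an etcd cluster of n members.
quorum() ( echo $(( $1 / 2 + 1 )) )
tolerated() ( echo $(( $1 - ($1 / 2 + 1) )) )

for n in 1 2 3 4 5; do
  echo "members=$n quorum=$(quorum "$n") tolerated_failures=$(tolerated "$n")"
done
```

Four members tolerate one failure, exactly like three, which is why the extra even member buys nothing.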

Preparation:

yum repository:

    wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

Set up time synchronization:

    systemctl start chronyd.service
    systemctl enable chronyd.service
    timedatectl set-timezone Asia/Shanghai
    chronyc -a makestep
    timedatectl status    # show the time zone

DNS, host names, SELinux and the firewall also have to be configured on every node.

Installing etcd

Check the available version (3.x is recommended):

    yum info etcd

Install:

    yum install etcd -y

Create the certificate directory:

    mkdir /etc/etcd/cert

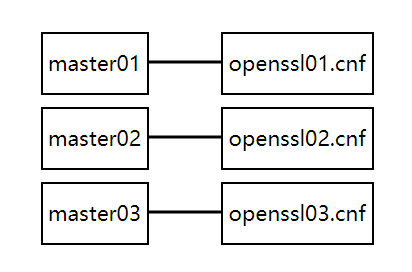

openssl.cnf: the IP addresses and DNS names have to be embedded when the CSR is created. In a highly available cluster every certificate set has its own configuration file whose contents change with the node; one shared configuration is not enough.

Create the configuration file used for the etcd certificates (one per node; only DNS.5 differs):

    # On etcd01
    cat > openssl.cnf <<EOF
    [req]
    req_extensions = v3_req
    distinguished_name = req_distinguished_name
    [ req_distinguished_name ]
    [ v3_req ]
    basicConstraints = CA:FALSE
    keyUsage = nonRepudiation, digitalSignature, keyEncipherment
    subjectAltName = @alt_names
    [alt_names]
    DNS.1 = kubernetes
    DNS.2 = kubernetes.default
    DNS.3 = kubernetes.default.svc
    DNS.4 = kubernetes.default.svc.cluster.local
    DNS.5 = etcd01
    DNS.6 = localhost
    IP.1 = 10.96.0.1
    EOF

    # On etcd02
    cat > openssl.cnf <<EOF
    [req]
    req_extensions = v3_req
    distinguished_name = req_distinguished_name
    [ req_distinguished_name ]
    [ v3_req ]
    basicConstraints = CA:FALSE
    keyUsage = nonRepudiation, digitalSignature, keyEncipherment
    subjectAltName = @alt_names
    [alt_names]
    DNS.1 = kubernetes
    DNS.2 = kubernetes.default
    DNS.3 = kubernetes.default.svc
    DNS.4 = kubernetes.default.svc.cluster.local
    DNS.5 = etcd02
    DNS.6 = localhost
    IP.1 = 10.96.0.1
    EOF

    # On etcd03
    cat > openssl.cnf <<EOF
    [req]
    req_extensions = v3_req
    distinguished_name = req_distinguished_name
    [ req_distinguished_name ]
    [ v3_req ]
    basicConstraints = CA:FALSE
    keyUsage = nonRepudiation, digitalSignature, keyEncipherment
    subjectAltName = @alt_names
    [alt_names]
    DNS.1 = kubernetes
    DNS.2 = kubernetes.default
    DNS.3 = kubernetes.default.svc
    DNS.4 = kubernetes.default.svc.cluster.local
    DNS.5 = etcd03
    DNS.6 = localhost
    IP.1 = 10.96.0.1
    EOF
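Since the three files differ only in DNS.5, they can also be generated from one template. This is a minimal sketch, assuming you prefer to generate all files in one place; the function name write_openssl_cnf and the openssl-NODE.cnf file naming are hypothetical and not part of the original procedure.

```shell
# Write the openssl.cnf shown above, with DNS.5 set to the given node name.
# Usage: write_openssl_cnf <node-name> <output-file>
write_openssl_cnf() (
  node=$1
  out=$2
  cat > "$out" <<EOF
[req]
req_extensions = v3_req
distinguished_name = req_distinguished_name
[ req_distinguished_name ]
[ v3_req ]
basicConstraints = CA:FALSE
keyUsage = nonRepudiation, digitalSignature, keyEncipherment
subjectAltName = @alt_names
[alt_names]
DNS.1 = kubernetes
DNS.2 = kubernetes.default
DNS.3 = kubernetes.default.svc
DNS.4 = kubernetes.default.svc.cluster.local
DNS.5 = $node
DNS.6 = localhost
IP.1 = 10.96.0.1
EOF
)

# One file per etcd member, written to a scratch directory for inspection.
outdir=$(mktemp -d)
for node in etcd01 etcd02 etcd03; do
  write_openssl_cnf "$node" "$outdir/openssl-$node.cnf"
done
grep '^DNS.5' "$outdir"/openssl-*.cnf
```

Pass the matching file with -config and -extfile when creating each node's CSR and certificate.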

Create the etcd certificates (sign each node's certificate with that node's openssl.cnf):

    # Create the peer CA
    openssl genrsa -out ca-peer.key 2048
    openssl req -x509 -new -nodes -key ca-peer.key -subj "/CN=etcd" -days 5000 -out ca-peer.crt

    # Create the peer certificates
    openssl genrsa -out etcd_peer1.key 2048
    openssl req -new -key etcd_peer1.key -subj "/CN=etcd" -out etcd_peer1.csr -config openssl.cnf
    openssl x509 -req -in etcd_peer1.csr -CA ca-peer.crt -CAkey ca-peer.key -CAcreateserial -out etcd_peer1.crt -days 5000 -extensions v3_req -extfile openssl.cnf

    openssl genrsa -out etcd_peer2.key 2048
    openssl req -new -key etcd_peer2.key -subj "/CN=etcd" -out etcd_peer2.csr -config openssl.cnf
    openssl x509 -req -in etcd_peer2.csr -CA ca-peer.crt -CAkey ca-peer.key -CAcreateserial -out etcd_peer2.crt -days 5000 -extensions v3_req -extfile openssl.cnf

    openssl genrsa -out etcd_peer3.key 2048
    openssl req -new -key etcd_peer3.key -subj "/CN=etcd" -out etcd_peer3.csr -config openssl.cnf
    openssl x509 -req -in etcd_peer3.csr -CA ca-peer.crt -CAkey ca-peer.key -CAcreateserial -out etcd_peer3.crt -days 5000 -extensions v3_req -extfile openssl.cnf

    # Create the CA used between the servers and their clients
    openssl genrsa -out ca-client.key 2048
    openssl req -x509 -new -nodes -key ca-client.key -subj "/CN=etcd" -days 5000 -out ca-client.crt

    # Create each node's server certificate for client connections
    openssl genrsa -out etcd_client1.key 2048
    openssl req -new -key etcd_client1.key -subj "/CN=etcd" -out etcd_client1.csr -config openssl.cnf
    openssl x509 -req -in etcd_client1.csr -CA ca-client.crt -CAkey ca-client.key -CAcreateserial -out etcd_client1.crt -days 5000 -extensions v3_req -extfile openssl.cnf

    openssl genrsa -out etcd_client2.key 2048
    openssl req -new -key etcd_client2.key -subj "/CN=etcd" -out etcd_client2.csr -config openssl.cnf
    openssl x509 -req -in etcd_client2.csr -CA ca-client.crt -CAkey ca-client.key -CAcreateserial -out etcd_client2.crt -days 5000 -extensions v3_req -extfile openssl.cnf

    openssl genrsa -out etcd_client3.key 2048
    openssl req -new -key etcd_client3.key -subj "/CN=etcd" -out etcd_client3.csr -config openssl.cnf
    openssl x509 -req -in etcd_client3.csr -CA ca-client.crt -CAkey ca-client.key -CAcreateserial -out etcd_client3.crt -days 5000 -extensions v3_req -extfile openssl.cnf

    # Certificate used by clients to access the cluster
    openssl genrsa -out etcd_client.key 2048
    openssl req -new -key etcd_client.key -subj "/CN=etcd" -out etcd_client.csr -config openssl.cnf
    openssl x509 -req -in etcd_client.csr -CA ca-client.crt -CAkey ca-client.key -CAcreateserial -out etcd_client.crt -days 5000 -extensions v3_req -extfile openssl.cnf
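Every certificate above is produced by the same three openssl calls, so the repetition can be folded into a helper. The following is a sketch under that assumption; issue_cert and the OPENSSL override variable are names introduced here for illustration only.

```shell
# OPENSSL can be overridden (for example OPENSSL=echo) to print the commands
# instead of running them; the override is a convenience of this sketch.
OPENSSL=${OPENSSL:-openssl}

# Usage: issue_cert <ca-basename> <cert-basename> <subject>
# Expects <ca>.crt, <ca>.key and openssl.cnf in the current directory.
issue_cert() (
  ca=$1
  name=$2
  subj=$3
  "$OPENSSL" genrsa -out "$name.key" 2048 &&
  "$OPENSSL" req -new -key "$name.key" -subj "$subj" \
    -out "$name.csr" -config openssl.cnf &&
  "$OPENSSL" x509 -req -in "$name.csr" -CA "$ca.crt" -CAkey "$ca.key" \
    -CAcreateserial -out "$name.crt" -days 5000 \
    -extensions v3_req -extfile openssl.cnf
)

# Example calls, equivalent to two of the command groups above:
#   issue_cert ca-peer   etcd_peer1   "/CN=etcd"
#   issue_cert ca-client etcd_client1 "/CN=etcd"
```

Running a call with OPENSSL=echo first is a cheap way to review the exact commands before any key material is written.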

Configuration variables:

    vim /etc/etcd/etcd.conf

    ETCD_NAME=etcd01
    ETCD_DATA_DIR=/var/lib/etcd/default.etcd
    ETCD_INITIAL_ADVERTISE_PEER_URLS=https://etcd01:2380
    ETCD_LISTEN_PEER_URLS=https://192.168.1.163:2380
    ETCD_LISTEN_CLIENT_URLS=https://192.168.1.163:2379,https://127.0.0.1:2379
    ETCD_ADVERTISE_CLIENT_URLS=https://etcd01:2379,https://localhost:2379

    # Cluster membership
    ETCD_INITIAL_CLUSTER_TOKEN=etcd-cluster-token
    ETCD_INITIAL_CLUSTER=etcd01=https://etcd01:2380,etcd02=https://etcd02:2380,etcd03=https://etcd03:2380
    ETCD_INITIAL_CLUSTER_STATE=new

    # Server side of client authentication
    ETCD_CLIENT_CERT_AUTH="true"
    ETCD_TRUSTED_CA_FILE=/etc/etcd/cert/ca-client.crt
    ETCD_CERT_FILE=/etc/etcd/cert/etcd_client1.crt
    ETCD_KEY_FILE=/etc/etcd/cert/etcd_client1.key

    # Certificates for peer communication inside the cluster
    ETCD_PEER_CLIENT_CERT_AUTH="true"
    ETCD_PEER_TRUSTED_CA_FILE=/etc/etcd/cert/ca-peer.crt
    ETCD_PEER_CERT_FILE=/etc/etcd/cert/etcd_peer1.crt
    ETCD_PEER_KEY_FILE=/etc/etcd/cert/etcd_peer1.key
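The file above is for etcd01; on the other members the name, the URLs and the certificate file names change, while ETCD_INITIAL_CLUSTER must be identical everywhere. A small sketch for building that value, assuming every peer URL has the form https://NAME:2380 as above (initial_cluster is a hypothetical helper):

```shell
# Build the ETCD_INITIAL_CLUSTER value from a list of member names.
initial_cluster() (
  result=
  for name in "$@"; do
    result="${result:+$result,}$name=https://$name:2380"
  done
  echo "$result"
)

initial_cluster etcd01 etcd02 etcd03
```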

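Start etcd:

    systemctl start etcd.service
    systemctl enable etcd.service

Check the members. The command syntax depends on the etcdctl API version, so you may need to set export ETCDCTL_API=2 or export ETCDCTL_API=3 first. The flags below are the v2 names; with the v3 API the equivalents are --cacert, --cert and --key.

    etcdctl --ca-file ca-client.crt --cert-file etcd_client.crt --key-file etcd_client.key --endpoints='https://etcd01:2379' member list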
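For the v3 API, a health check across all members could look like the sketch below. The helper names are hypothetical, and the certificate paths assume the client certificate was copied to /etc/etcd/cert, which the original text does not state explicitly.

```shell
# Build a comma-separated client endpoint list from member names.
etcd_endpoints() (
  result=
  for name in "$@"; do
    result="${result:+$result,}https://$name:2379"
  done
  echo "$result"
)

# Query the health of every listed member with the v3 flag names.
etcd_health() {
  ETCDCTL_API=3 etcdctl \
    --cacert /etc/etcd/cert/ca-client.crt \
    --cert /etc/etcd/cert/etcd_client.crt \
    --key /etc/etcd/cert/etcd_client.key \
    --endpoints "$(etcd_endpoints "$@")" \
    endpoint health
}

# Example: etcd_health etcd01 etcd02 etcd03
```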

Kubernetes configuration

Create the certificate configuration file for the master nodes; every node has its own file.

    # On k8s-master01
    cat > openssl.cnf <<EOF
    [req]
    req_extensions = v3_req
    distinguished_name = req_distinguished_name
    [ req_distinguished_name ]
    [ v3_req ]
    basicConstraints = CA:FALSE
    keyUsage = nonRepudiation, digitalSignature, keyEncipherment
    subjectAltName = @alt_names
    [alt_names]
    DNS.1 = kubernetes
    DNS.2 = kubernetes.default
    DNS.3 = kubernetes.default.svc
    DNS.4 = kubernetes.default.svc.cluster.local
    DNS.5 = k8s-master01
    DNS.6 = localhost
    IP.1 = 10.96.0.1
    EOF

    # On k8s-master02
    cat > openssl.cnf <<EOF
    [req]
    req_extensions = v3_req
    distinguished_name = req_distinguished_name
    [ req_distinguished_name ]
    [ v3_req ]
    basicConstraints = CA:FALSE
    keyUsage = nonRepudiation, digitalSignature, keyEncipherment
    subjectAltName = @alt_names
    [alt_names]
    DNS.1 = kubernetes
    DNS.2 = kubernetes.default
    DNS.3 = kubernetes.default.svc
    DNS.4 = kubernetes.default.svc.cluster.local
    DNS.5 = k8s-master02
    DNS.6 = localhost
    IP.1 = 10.96.0.1
    EOF

    # On k8s-master03
    cat > openssl.cnf <<EOF
    [req]
    req_extensions = v3_req
    distinguished_name = req_distinguished_name
    [ req_distinguished_name ]
    [ v3_req ]
    basicConstraints = CA:FALSE
    keyUsage = nonRepudiation, digitalSignature, keyEncipherment
    subjectAltName = @alt_names
    [alt_names]
    DNS.1 = kubernetes
    DNS.2 = kubernetes.default
    DNS.3 = kubernetes.default.svc
    DNS.4 = kubernetes.default.svc.cluster.local
    DNS.5 = k8s-master03
    DNS.6 = localhost
    IP.1 = 10.96.0.1
    EOF

Create the certificates:

Cluster CA certificate: a cluster has exactly one CA, and all nodes trust that same CA.

    # Create the CA of the k8s cluster
    openssl genrsa -out ca.key 2048
    openssl req -x509 -new -nodes -key ca.key -subj "/CN=k8s-ca" -days 5000 -out ca.crt

Create the component certificates:

Use the CA created above to issue the certificates of every component on every node. The commands below cover a single node; repeat them once per node.

Note that the /O=system:masters part in the subject of the apiserver-kubelet-client certificate is required. This key pair is also used by kubectl, and the O field places it in the system:masters group, which is where its permissions come from; otherwise kubectl has no permission to read resources.

    # Certificate used by the apiserver
    openssl genrsa -out apiserver.key 2048
    openssl req -new -key apiserver.key -subj "/CN=kube-apiserver" -out apiserver.csr -config openssl.cnf
    openssl x509 -req -in apiserver.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out apiserver.crt -days 5000 -extensions v3_req -extfile openssl.cnf

    # apiserver-kubelet-client certificate
    openssl genrsa -out apiserver-kubelet-client.key 2048
    openssl req -new -key apiserver-kubelet-client.key -subj "/CN=kube-apiserver-kubelet-client/O=system:masters" -out apiserver-kubelet-client.csr -config openssl.cnf
    openssl x509 -req -in apiserver-kubelet-client.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out apiserver-kubelet-client.crt -days 5000 -extensions v3_req -extfile openssl.cnf

    # Certificate used by kube-controller-manager
    openssl genrsa -out kube-controller-manager.key 2048
    openssl req -new -key kube-controller-manager.key -subj "/CN=system:kube-controller-manager" -out kube-controller-manager.csr -config openssl.cnf
    openssl x509 -req -in kube-controller-manager.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out kube-controller-manager.crt -days 5000 -extensions v3_req -extfile openssl.cnf

    # Certificate used by kube-scheduler
    openssl genrsa -out kube-scheduler.key 2048
    openssl req -new -key kube-scheduler.key -subj "/CN=system:kube-scheduler" -out kube-scheduler.csr -config openssl.cnf
    openssl x509 -req -in kube-scheduler.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out kube-scheduler.crt -days 5000 -extensions v3_req -extfile openssl.cnf

    # Certificate used by kube-proxy
    openssl genrsa -out kube-proxy.key 2048
    openssl req -new -key kube-proxy.key -subj "/CN=system:kube-proxy" -out kube-proxy.csr -config openssl.cnf
    openssl x509 -req -in kube-proxy.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out kube-proxy.crt -days 5000 -extensions v3_req -extfile openssl.cnf
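With client-certificate authentication the API server takes the user name from the CN field and the group memberships from the O fields of the subject. The sketch below only splits a -subj string the same way, to make it easy to see which identity a certificate will carry; subject_identity is a hypothetical helper and it assumes the field values contain no slash.

```shell
# Split an OpenSSL -subj string into the user (CN) and groups (O).
subject_identity() (
  subj=$1
  user=$(printf '%s\n' "$subj" | tr '/' '\n' | sed -n 's/^CN=//p')
  grps=$(printf '%s\n' "$subj" | tr '/' '\n' | sed -n 's/^O=//p' | paste -sd, -)
  echo "user=$user groups=$grps"
)

subject_identity "/CN=kube-apiserver-kubelet-client/O=system:masters"
subject_identity "/CN=system:kube-scheduler"
```

On an issued certificate the same information can be read with openssl x509 -noout -subject -in FILE.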

The subject strings of these certificates are:

    "/CN=kube-apiserver"
    "/CN=kube-apiserver-kubelet-client/O=system:masters"
    "/CN=system:kube-controller-manager"
    "/CN=system:kube-scheduler"
    "/CN=system:kube-proxy"

front-proxy-client certificate:

The CA used here is a separate one, not the cluster CA above.

The subject is "/CN=front-proxy-client".

    # Create the CA for front-proxy-client
    openssl genrsa -out front-proxy-ca.key 2048
    openssl req -x509 -new -nodes -key front-proxy-ca.key -subj "/CN=front-proxy-ca" -days 5000 -out front-proxy-ca.crt

    # Create the front-proxy-client certificate
    openssl genrsa -out front-proxy-client.key 2048
    openssl req -new -key front-proxy-client.key -subj "/CN=front-proxy-client" -out front-proxy-client.csr -config openssl.cnf
    openssl x509 -req -in front-proxy-client.csr -CA front-proxy-ca.crt -CAkey front-proxy-ca.key -CAcreateserial -out front-proxy-client.crt -days 5000 -extensions v3_req -extfile openssl.cnf

api-server

Common configuration file (config):

    # logging to stderr means we get it in the systemd journal
    KUBE_LOGTOSTDERR="--logtostderr=true"

    # journal message level, 0 is debug
    KUBE_LOG_LEVEL="--v=0"

    # Should this cluster be allowed to run privileged docker containers
    # (version < 1.15)
    KUBE_ALLOW_PRIV="--allow-privileged=true"

api-server configuration:

    # Address advertised to the other members of the cluster
    # (the listen address itself is set with --bind-address).
    KUBE_API_ADDRESS="--advertise-address=0.0.0.0"

    # Listening ports; --insecure-port=0 disables the insecure port.
    KUBE_API_PORT="--secure-port=6443 --insecure-port=0"

    # Comma separated list of nodes in the etcd cluster
    KUBE_ETCD_SERVERS="--etcd-servers=https://etcd01:2379,https://etcd02:2379,https://etcd03:2379"

    # Address range to use for services
    KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.96.0.0/12"

    # Admission plugins to enable; the common ones are on by default.
    # More background: scriptjc.com/article/1161
    KUBE_ADMISSION_CONTROL="--enable-admission-plugins=NodeRestriction"

    # Add your own!
    # Notes on the flags below (comments cannot go inside the quoted value):
    #   --authorization-mode            authorization modes to enable
    #   --enable-bootstrap-token-auth   authenticate with bootstrap tokens
    #   --etcd-cafile, --etcd-certfile, --etcd-keyfile
    #                                   CA and client certificate for talking to etcd
    #   --client-ca-file                CA used to authenticate client certificates
    #   --kubelet-client-*              client certificate used to talk to the kubelets
    #   --proxy-client-*, --requestheader-*
    #                                   front-proxy (API aggregation) settings; these are
    #                                   unrelated to kube-proxy
    #   --service-account-key-file      public key used to verify ServiceAccount tokens,
    #                                   which kube-controller-manager signs with sa.key
    #   --tls-cert-file, --tls-private-key-file
    #                                   serving certificate and key of the API server
    #   --token-auth-file               static token file used for node bootstrap authentication
    # The paths below use /etc/kubernetes/pki and /etc/etcd/pki, while the
    # directories created in this guide are /etc/kubernetes/cert and
    # /etc/etcd/cert; adjust them to where your certificates actually live.
    KUBE_API_ARGS="--authorization-mode=Node,RBAC \
    --enable-bootstrap-token-auth=true \
    --etcd-cafile=/etc/etcd/pki/ca.crt \
    --etcd-certfile=/etc/kubernetes/pki/apiserver-etcd-client.crt \
    --etcd-keyfile=/etc/kubernetes/pki/apiserver-etcd-client.key \
    --client-ca-file=/etc/kubernetes/pki/ca.crt \
    --kubelet-client-certificate=/etc/kubernetes/pki/apiserver-kubelet-client.crt \
    --kubelet-client-key=/etc/kubernetes/pki/apiserver-kubelet-client.key \
    --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \
    --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.crt \
    --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client.key \
    --requestheader-allowed-names=front-proxy-client \
    --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.crt \
    --requestheader-extra-headers-prefix=X-Remote-Extra- \
    --requestheader-group-headers=X-Remote-Group \
    --requestheader-username-headers=X-Remote-User \
    --service-account-key-file=/etc/kubernetes/pki/sa.pub \
    --tls-cert-file=/etc/kubernetes/pki/apiserver.crt \
    --tls-private-key-file=/etc/kubernetes/pki/apiserver.key \
    --token-auth-file=/etc/kubernetes/token.csv"

kube-apiserver.service file:

    [Unit]
    Description=Kubernetes API Server
    Documentation=https://github.com/GoogleCloudPlatform/kubernetes
    After=network.target
    After=etcd.service

    [Service]
    EnvironmentFile=-/etc/kubernetes/config
    EnvironmentFile=-/etc/kubernetes/api-server
    User=kube
    ExecStart=/usr/local/kubernetes/kube-apiserver \
        $KUBE_LOGTOSTDERR \
        $KUBE_LOG_LEVEL \
        $KUBE_ETCD_SERVERS \
        $KUBE_API_ADDRESS \
        $KUBE_API_PORT \
        $KUBELET_PORT \
        $KUBE_ALLOW_PRIV \
        $KUBE_SERVICE_ADDRESSES \
        $KUBE_ADMISSION_CONTROL \
        $KUBE_API_ARGS
    Restart=on-failure
    Type=notify
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target

For the full list of flags see:

https://kubernetes.io/zh/docs/reference/command-line-tools-reference/kube-apiserver/

Create the user:

    useradd -r kube
    mkdir /var/run/kubernetes
    chown kube:kube /var/run/kubernetes

Create the certificate directory:

    mkdir /etc/kubernetes/cert/ -pv

Copy the binaries to:

    /usr/local/kubernetes/

Generate the key pair used for ServiceAccount tokens:

    openssl genrsa -out sa.key 2048
    openssl rsa -in sa.key -pubout -out sa.pub

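Add the following flag to the kube-apiserver start-up parameters (the public key, used to verify ServiceAccount tokens):

    --service-account-key-file=/etc/kubernetes/cert/sa.pub

Add the following flag to the kube-controller-manager start-up parameters (the private key, used to sign them):

    --service-account-private-key-file=/etc/kubernetes/cert/sa.key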

Generate the token file used for TLS bootstrapping:

    BOOTSTRAP_TOKEN="$(head -c 6 /dev/urandom | md5sum | head -c 6).$(head -c 16 /dev/urandom | md5sum | head -c 16)"
    echo "$BOOTSTRAP_TOKEN,system:bootstrapper,10001,\"system:bootstrappers\"" > /etc/kubernetes/token.csv

The generated content looks like this (token, user name, UID, groups):

    54c451.b68dc21e45c57e2a,system:bootstrapper,10001,"system:bootstrappers"
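The generator above produces a token of six characters, a dot and sixteen characters. A static token file does not strictly require this shape, so the check below is only a sanity test for a line produced by that generator; valid_bootstrap_token is a hypothetical helper name.

```shell
# Succeeds when the argument looks like xxxxxx.yyyyyyyyyyyyyyyy ([a-z0-9] only).
valid_bootstrap_token() (
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
)

# Sample line copied from the text above; the token is the first CSV field.
line='54c451.b68dc21e45c57e2a,system:bootstrapper,10001,"system:bootstrappers"'
token=${line%%,*}
if valid_bootstrap_token "$token"; then
  echo "token format ok: $token"
else
  echo "unexpected token format: $token"
fi
```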

Start the service:

    systemctl start kube-apiserver.service
    systemctl enable kube-apiserver.service

Watch the log:

    tail -f /var/log/messages

Fetch resources:

    kubectl --server=https://k8s-master:6443 --certificate-authority=ca.crt --client-certificate=apiserver-kubelet-client.crt --client-key=apiserver-kubelet-client.key get all

Generate the configuration file used by kubectl:

By default it lives in $HOME/.kube, so the certificates no longer have to be passed on the command line.

The --server="https://k8s-master:6443" parameter should point at the load balancer's DNS name, in preparation for the high-availability setup later on.

    kubectl config set-cluster kubernetes --server="https://k8s-master:6443" --certificate-authority=/etc/kubernetes/cert/ca.crt --embed-certs=true
    kubectl config set-credentials k8s --client-certificate=/etc/kubernetes/cert/apiserver-kubelet-client.crt --client-key=/etc/kubernetes/cert/apiserver-kubelet-client.key --embed-certs=true
    kubectl config set-context k8s@kubernetes --cluster=kubernetes --user=k8s
    kubectl config use-context k8s@kubernetes

View the configuration:

    ~]# kubectl config view
    apiVersion: v1
    clusters:
    - cluster:
        certificate-authority-data: DATA+OMITTED
        server: https://k8s-master:6443
      name: kubernetes
    contexts:
    - context:
        cluster: kubernetes
        user: k8s
      name: k8s@kubernetes
    current-context: k8s@kubernetes
    kind: Config
    preferences: {}
    users:
    - name: k8s
      user:
        client-certificate-data: REDACTED
        client-key-data: REDACTED

TLS bootstrapping bindings: these allow the kubelet to create CSRs. Pick one of the two methods below.

Bind to the user:

    kubectl create clusterrolebinding system:bootstrapper --user=system:bootstrapper --clusterrole=system:node-bootstrapper

Or bind to the group:

    kubectl create clusterrolebinding system:bootstrapper --group=system:bootstrappers --clusterrole=system:node-bootstrapper

Approve CSRs automatically:

    kubectl create clusterrolebinding auto-approve-csrs-for-group --group=system:bootstrappers --clusterrole=system:certificates.k8s.io:certificatesigningrequests:nodeclient

Approve certificate renewal CSRs automatically:

    kubectl create clusterrolebinding auto-approve-renewals-for-nodes --group=system:nodes --clusterrole=system:certificates.k8s.io:certificatesigningrequests:selfnodeclient

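controller-manager

kube-controller-manager configuration:

    # Notes on the flags below (comments cannot go inside the quoted value):
    #   --bind-address                  listen address
    #   --allocate-node-cidrs           automatically assign a Pod subnet to every node
    #   --authentication-kubeconfig, --authorization-kubeconfig, --kubeconfig
    #                                   kubeconfig used to connect to the API server
    #   --cluster-cidr                  Pod network
    #   --cluster-signing-cert-file, --cluster-signing-key-file
    #                                   CA certificate and key used to sign node certificates
    #   --controllers                   which controllers to start
    #   --leader-elect                  enable leader election
    #   --node-cidr-mask-size           prefix length of the per-node subnets that are cut
    #                                   out of the --cluster-cidr network
    #   --requestheader-client-ca-file  CA used to verify client certificates when
    #                                   controller-manager acts as a server
    #   --service-account-private-key-file
    #                                   private key used to sign ServiceAccount tokens
    #   --use-service-account-credentials
    #                                   give every controller its own ServiceAccount
    KUBE_CONTROLLER_MANAGER_ARGS="--bind-address=127.0.0.1 \
    --allocate-node-cidrs=true \
    --authentication-kubeconfig=/etc/kubernetes/auth/controller-manager.conf \
    --authorization-kubeconfig=/etc/kubernetes/auth/controller-manager.conf \
    --client-ca-file=/etc/kubernetes/pki/ca.crt \
    --cluster-cidr=10.244.0.0/16 \
    --cluster-signing-cert-file=/etc/kubernetes/pki/ca.crt \
    --cluster-signing-key-file=/etc/kubernetes/pki/ca.key \
    --controllers=*,bootstrapsigner,tokencleaner \
    --kubeconfig=/etc/kubernetes/auth/controller-manager.conf \
    --leader-elect=true \
    --node-cidr-mask-size=24 \
    --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.crt \
    --root-ca-file=/etc/kubernetes/pki/ca.crt \
    --service-account-private-key-file=/etc/kubernetes/pki/sa.key \
    --use-service-account-credentials=true"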
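The interplay of --cluster-cidr and --node-cidr-mask-size is simple prefix arithmetic. The sketch below works it out for the values used here (a /16 Pod network cut into /24 node subnets); the function names are illustrative only.

```shell
# Number of per-node subnets carved out of the cluster CIDR.
# Usage: node_subnets <cluster-prefix-length> <node-prefix-length>
node_subnets() ( echo $(( 1 << ($2 - $1) )) )

# Number of addresses inside one node subnet.
# Usage: addresses_per_node <node-prefix-length>
addresses_per_node() ( echo $(( 1 << (32 - $1) )) )

echo "node subnets: $(node_subnets 16 24)"
echo "addresses per node subnet: $(addresses_per_node 24)"
```

With these values the cluster can hand out 256 node subnets of 256 addresses each, which comfortably covers the kubelet's maxPods setting of 110.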

Generate controller-manager.conf:

The user name system:kube-controller-manager is built into the system and must be used as is, unless you grant the permissions yourself. The file is written to the current directory; the configuration above reads it from /etc/kubernetes/auth/, so run the commands there or move the file afterwards.

    kubectl config --kubeconfig=controller-manager.conf set-cluster kubernetes --server="https://k8s-master:6443" --certificate-authority=/etc/kubernetes/cert/ca.crt --embed-certs=true
    kubectl config --kubeconfig=controller-manager.conf set-credentials system:kube-controller-manager --client-certificate=/etc/kubernetes/cert/kube-controller-manager.crt --client-key=/etc/kubernetes/cert/kube-controller-manager.key --embed-certs=true
    kubectl config --kubeconfig=controller-manager.conf set-context system:kube-controller-manager@kubernetes --cluster=kubernetes --user=system:kube-controller-manager
    kubectl config --kubeconfig=controller-manager.conf use-context system:kube-controller-manager@kubernetes

Set the permissions:

    chmod 644 controller-manager.conf

kube-controller-manager.service file:

    [Unit]
    Description=Kubernetes Controller Manager
    Documentation=https://github.com/GoogleCloudPlatform/kubernetes

    [Service]
    EnvironmentFile=-/etc/kubernetes/config
    EnvironmentFile=-/etc/kubernetes/controller-manager
    User=kube
    ExecStart=/usr/local/kubernetes/kube-controller-manager \
        $KUBE_LOGTOSTDERR \
        $KUBE_LOG_LEVEL \
        $KUBE_MASTER \
        $KUBE_CONTROLLER_MANAGER_ARGS
    Restart=on-failure
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target

Start the service:

    systemctl start kube-controller-manager.service
    systemctl enable kube-controller-manager.service

scheduler

scheduler configuration:

    # --address      listen address
    # --kubeconfig   kubeconfig file
    # --leader-elect enable leader election
    KUBE_SCHEDULER_ARGS="--address=127.0.0.1 \
    --kubeconfig=/etc/kubernetes/auth/scheduler.conf \
    --leader-elect=true"

kube-scheduler.service file:

    [Unit]
    Description=Kubernetes Scheduler Plugin
    Documentation=https://github.com/GoogleCloudPlatform/kubernetes

    [Service]
    EnvironmentFile=-/etc/kubernetes/config
    EnvironmentFile=-/etc/kubernetes/scheduler
    User=kube
    ExecStart=/usr/local/kubernetes/kube-scheduler \
        $KUBE_LOGTOSTDERR \
        $KUBE_LOG_LEVEL \
        $KUBE_MASTER \
        $KUBE_SCHEDULER_ARGS
    Restart=on-failure
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target

Generate scheduler.conf:

The user name must be system:kube-scheduler, which is a built-in user name; otherwise the permissions have to be granted manually. As with controller-manager.conf, the file has to end up in /etc/kubernetes/auth/, where the configuration above looks for it.

    kubectl config --kubeconfig=scheduler.conf set-cluster kubernetes --server="https://k8s-master:6443" --certificate-authority=/etc/kubernetes/cert/ca.crt --embed-certs=true
    kubectl config --kubeconfig=scheduler.conf set-credentials system:kube-scheduler --client-certificate=/etc/kubernetes/cert/kube-scheduler.crt --client-key=/etc/kubernetes/cert/kube-scheduler.key --embed-certs=true
    kubectl config --kubeconfig=scheduler.conf set-context system:kube-scheduler@kubernetes --cluster=kubernetes --user=system:kube-scheduler
    kubectl config --kubeconfig=scheduler.conf use-context system:kube-scheduler@kubernetes
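The kubeconfig files for controller-manager and scheduler are built with the same four kubectl config commands and differ only in the user name and certificate paths. A sketch of a helper that captures the pattern follows; make_kubeconfig and the KUBECTL, SERVER and CA variables are names introduced for this illustration.

```shell
# KUBECTL can be overridden (for example KUBECTL=echo) to print the commands.
KUBECTL=${KUBECTL:-kubectl}
# API server URL and CA path as used throughout this guide.
SERVER=${SERVER:-https://k8s-master:6443}
CA=${CA:-/etc/kubernetes/cert/ca.crt}

# Usage: make_kubeconfig <output-file> <user> <client-cert> <client-key>
make_kubeconfig() (
  file=$1
  user=$2
  cert=$3
  key=$4
  "$KUBECTL" config --kubeconfig="$file" set-cluster kubernetes \
    --server="$SERVER" --certificate-authority="$CA" --embed-certs=true &&
  "$KUBECTL" config --kubeconfig="$file" set-credentials "$user" \
    --client-certificate="$cert" --client-key="$key" --embed-certs=true &&
  "$KUBECTL" config --kubeconfig="$file" set-context "$user@kubernetes" \
    --cluster=kubernetes --user="$user" &&
  "$KUBECTL" config --kubeconfig="$file" use-context "$user@kubernetes"
)

# Example, equivalent to the scheduler commands above:
#   make_kubeconfig scheduler.conf system:kube-scheduler \
#     /etc/kubernetes/cert/kube-scheduler.crt /etc/kubernetes/cert/kube-scheduler.key
```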

Set the permissions:

    chmod 644 scheduler.conf

Start the service:

    systemctl start kube-scheduler.service
    systemctl enable kube-scheduler.service

Worker nodes

Synchronize the time; DNS, host names, SELinux and the firewall have to be set up here as well.

    systemctl start chronyd.service
    systemctl enable chronyd.service
    timedatectl set-timezone Asia/Shanghai
    chronyc -a makestep
    timedatectl status

yum repository:

    wget -O /etc/yum.repos.d/docker-ce.repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Install docker:

    yum install docker-ce -y

Edit docker.service as follows, adding the proxy settings and the iptables rule:

    vim /usr/lib/systemd/system/docker.service

    [Service]
    Environment=HTTPS_PROXY=http://192.168.1.12:8118
    Environment=NO_PROXY=127.0.0.0/8,192.168.1.0/24
    ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock
    ExecStartPost=/usr/sbin/iptables -P FORWARD ACCEPT
    ExecReload=/bin/kill -s HUP $MAINPID

Reload the configuration and start docker:

    systemctl daemon-reload
    systemctl start docker

Check that the configuration took effect and docker runs normally:

    ~]# docker info

Edit the sysctl configuration so that bridged traffic is passed to iptables; both values must be 1.

    vim /etc/sysctl.conf

    net.bridge.bridge-nf-call-iptables = 1
    net.bridge.bridge-nf-call-ip6tables = 1

Reload it:

    ~]# sysctl -p
    net.bridge.bridge-nf-call-iptables = 1
    net.bridge.bridge-nf-call-ip6tables = 1

Test pulling an image:

    docker pull k8s.gcr.io/pause

kubelet configuration

Create the directories:

    mkdir /var/lib/kubelet
    mkdir -p /etc/kubernetes/cert
    mkdir /etc/kubernetes/manifests

kubelet.service file of the kubelet:

    [Unit]
    Description=Kubernetes Kubelet Server
    Documentation=https://github.com/GoogleCloudPlatform/kubernetes
    After=docker.service
    Requires=docker.service

    [Service]
    WorkingDirectory=/var/lib/kubelet
    EnvironmentFile=-/etc/kubernetes/config
    EnvironmentFile=-/etc/kubernetes/kubelet
    ExecStart=/usr/local/kubernetes/kubelet \
        $KUBE_LOGTOSTDERR \
        $KUBE_LOG_LEVEL \
        $KUBELET_API_SERVER \
        $KUBELET_ADDRESS \
        $KUBELET_PORT \
        $KUBELET_HOSTNAME \
        $KUBE_ALLOW_PRIV \
        $KUBELET_ARGS
    Restart=on-failure
    KillMode=process
    RestartSec=10

    [Install]
    WantedBy=multi-user.target

Common configuration file (config):

    # logging to stderr means we get it in the systemd journal
    KUBE_LOGTOSTDERR="--logtostderr=true"

    # journal message level, 0 is debug
    KUBE_LOG_LEVEL="--v=0"

    # Should this cluster be allowed to run privileged docker containers
    # (no longer used from 1.15 on)
    # KUBE_ALLOW_PRIV="--allow-privileged=true"

kubelet configuration:

    # The address for the info server to serve on (set to 0.0.0.0 or "" for all interfaces)
    KUBELET_ADDRESS="--address=0.0.0.0"

    # The port for the info server to serve on
    # KUBELET_PORT="--port=10250"

    # Add your own!
    KUBELET_ARGS="--network-plugin=cni \
    --config=/var/lib/kubelet/config.yaml \
    --kubeconfig=/etc/kubernetes/auth/kubelet.conf \
    --bootstrap-kubeconfig=/etc/kubernetes/auth/bootstrap.conf"

config.yaml of the kubelet (both kubelet and kube-proxy can be configured through a configuration file):

    address: 0.0.0.0                  # listen address
    apiVersion: kubelet.config.k8s.io/v1beta1
    authentication:
      anonymous:
        enabled: false
      webhook:
        cacheTTL: 2m0s
        enabled: true
      x509:
        clientCAFile: /etc/kubernetes/cert/ca.crt   # trusted CA
    authorization:                    # authorization mode
      mode: Webhook
      webhook:
        cacheAuthorizedTTL: 5m0s
        cacheUnauthorizedTTL: 30s
    cgroupDriver: cgroupfs            # must match "Cgroup Driver: cgroupfs" in docker info
    cgroupsPerQOS: true
    clusterDNS:                       # Service address of the cluster DNS
    - 10.96.0.10
    clusterDomain: cluster.local      # domain suffix of this cluster
    configMapAndSecretChangeDetectionStrategy: Watch
    containerLogMaxFiles: 5
    containerLogMaxSize: 10Mi
    contentType: application/vnd.kubernetes.protobuf
    cpuCFSQuota: true
    cpuCFSQuotaPeriod: 100ms
    cpuManagerPolicy: none
    cpuManagerReconcilePeriod: 10s
    enableControllerAttachDetach: true
    enableDebuggingHandlers: true
    enforceNodeAllocatable:
    - pods
    eventBurst: 10
    eventRecordQPS: 5
    evictionHard:
      imagefs.available: 15%
      memory.available: 100Mi
      nodefs.available: 10%
      nodefs.inodesFree: 5%
    evictionPressureTransitionPeriod: 5m0s
    failSwapOn: false                 # do not fail when swap is enabled
    fileCheckFrequency: 20s
    hairpinMode: promiscuous-bridge
    healthzBindAddress: 127.0.0.1
    healthzPort: 10248
    httpCheckFrequency: 20s
    imageGCHighThresholdPercent: 85
    imageGCLowThresholdPercent: 80
    imageMinimumGCAge: 2m0s
    iptablesDropBit: 15
    iptablesMasqueradeBit: 14
    kind: KubeletConfiguration
    kubeAPIBurst: 10
    kubeAPIQPS: 5
    makeIPTablesUtilChains: true
    maxOpenFiles: 1000000
    maxPods: 110
    nodeLeaseDurationSeconds: 40
    nodeStatusUpdateFrequency: 10s
    oomScoreAdj: -999
    podPidsLimit: -1
    port: 10250
    registryBurst: 10
    registryPullQPS: 5
    resolvConf: /etc/resolv.conf
    rotateCertificates: true
    runtimeRequestTimeout: 2m0s
    serializeImagePulls: true
    staticPodPath: /etc/kubernetes/manifests   # location of static Pod manifests
    streamingConnectionIdleTimeout: 4h0m0s
    syncFrequency: 1m0s
    volumeStatsAggPeriod: 1m0s

Create bootstrap.conf on the master:

The CA is the cluster's ca.crt, the token is the one in token.csv, and the user name is system:bootstrapper.

    kubectl config --kubeconfig=bootstrap.conf set-cluster kubernetes --server="https://k8s-master:6443" --certificate-authority=/etc/kubernetes/cert/ca.crt
    kubectl config --kubeconfig=bootstrap.conf set-credentials system:bootstrapper --token=54c451.b68dc21e45c57e2a
    kubectl config --kubeconfig=bootstrap.conf set-context system:bootstrapper@kubernetes --user=system:bootstrapper --cluster=kubernetes
    kubectl config --kubeconfig=bootstrap.conf use-context system:bootstrapper@kubernetes

Change the permissions of bootstrap.conf:

    chmod 644 bootstrap.conf

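Copy bootstrap.conf to the node. The kubelet configuration above reads it from /etc/kubernetes/auth/bootstrap.conf, so the file has to end up in that directory:

    ssh root@k8s-node01 mkdir -p /etc/kubernetes/auth
    scp bootstrap.conf root@k8s-node01:/etc/kubernetes/auth/

Copy the CA certificate to the node:

    scp /etc/kubernetes/cert/ca.crt root@k8s-node01:/etc/kubernetes/cert/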

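kubelet.conf:

This file is generated automatically. The prerequisite is a working bootstrap.conf, which lets the kubelet send a certificate signing request (CSR) to the master; once the request is approved, the master issues the certificate to the kubelet.

List the CSRs on the master:

    kubectl get csr

Approve a CSR (not needed when automatic approval is configured):

    kubectl certificate approve csr-name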

Download the CNI plugins (release page: https://github.com/containernetworking/plugins/releases):

    wget https://github.com/containernetworking/plugins/releases/download/v0.8.5/cni-plugins-linux-amd64-v0.8.5.tgz

Install the CNI plugins:

    mkdir -p /opt/cni/bin
    tar -xf cni-plugins-linux-amd64-v0.8.5.tgz -C /opt/cni/bin/

Start the service:

    systemctl start kubelet.service
    systemctl enable kubelet.service

kube-proxy

kube-proxy.service file (/etc/kubernetes/config is the same file that the kubelet uses):

    [Unit]
    Description=Kubernetes Kube-Proxy Server
    Documentation=https://github.com/GoogleCloudPlatform/kubernetes
    After=network.target

    [Service]
    EnvironmentFile=-/etc/kubernetes/config
    EnvironmentFile=-/etc/kubernetes/proxy
    ExecStart=/usr/local/kubernetes/kube-proxy \
        $KUBE_LOGTOSTDERR \
        $KUBE_LOG_LEVEL \
        $KUBE_MASTER \
        $KUBE_PROXY_ARGS
    Restart=on-failure
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target

The configuration file /etc/kubernetes/proxy contains:

    ###
    # kubernetes proxy config

    # Add your own!
    KUBE_PROXY_ARGS="--config=/var/lib/kube-proxy/config.yaml"

config.yaml of kube-proxy:

    apiVersion: kubeproxy.config.k8s.io/v1alpha1
    bindAddress: 0.0.0.0              # listen address
    clientConnection:
      acceptContentTypes: ""
      burst: 10
      contentType: application/vnd.kubernetes.protobuf
      kubeconfig: /etc/kubernetes/kube-proxy.conf
      qps: 5
    clusterCIDR: 10.244.0.0/16        # Pod network
    configSyncPeriod: 15m0s
    conntrack:
      max: null
      maxPerCore: 32768
      min: 131072
      tcpCloseWaitTimeout: 1h0m0s
      tcpEstablishedTimeout: 24h0m0s
    enableProfiling: false
    healthzBindAddress: 0.0.0.0:10256 # address and port for health checks
    hostnameOverride: ""
    iptables:
      masqueradeAll: false
      masqueradeBit: 14
      minSyncPeriod: 0s
      syncPeriod: 30s
    ipvs:
      excludeCIDRs: null
      minSyncPeriod: 0s
      scheduler: ""
      syncPeriod: 30s
    kind: KubeProxyConfiguration
    metricsBindAddress: 127.0.0.1:10249   # address and port for metrics
    mode: ipvs                        # use IPVS; set to "" if IPVS is not wanted
    nodePortAddresses: null
    oomScoreAdj: -999
    portRange: ""

Load the kernel modules: create the script /etc/sysconfig/modules/ipvs.modules, which loads the IPVS modules automatically.

    #!/bin/bash
    ipvs_mods_dir="/usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs"
    for i in $(ls $ipvs_mods_dir | grep -o "^[^.]*"); do
        /sbin/modinfo -F filename $i &> /dev/null
        if [ $? -eq 0 ]; then
            /sbin/modprobe $i
        fi
    done

Make it executable and run it:

    chmod +x /etc/sysconfig/modules/ipvs.modules
    /etc/sysconfig/modules/ipvs.modules
    lsmod | grep ip_vs

Create the kube-proxy.conf configuration file on the master:

The kube-proxy configuration file is not generated automatically; it has to be created by hand, in the same way as the kubectl configuration.

The CA is the k8s cluster CA and the certificate pair is kube-proxy.crt and kube-proxy.key. The user name in the configuration file is system:kube-proxy, a built-in user name that must be used as is.

    kubectl config --kubeconfig=kube-proxy.conf set-cluster kubernetes --server="https://k8s-master:6443" --certificate-authority=/etc/kubernetes/cert/ca.crt --embed-certs=true
    kubectl config --kubeconfig=kube-proxy.conf set-credentials system:kube-proxy --client-certificate=/etc/kubernetes/cert/kube-proxy.crt --client-key=/etc/kubernetes/cert/kube-proxy.key --embed-certs=true
    kubectl config --kubeconfig=kube-proxy.conf set-context system:kube-proxy@kubernetes --cluster=kubernetes --user=system:kube-proxy
    kubectl config --kubeconfig=kube-proxy.conf use-context system:kube-proxy@kubernetes

Change the permissions:

    chmod 644 kube-proxy.conf

Copy kube-proxy.conf to the node:

    scp kube-proxy.conf root@k8s-node01:/etc/kubernetes/

Start kube-proxy:

    systemctl start kube-proxy.service
    systemctl enable kube-proxy.service

Deploy the flannel network plugin:

    kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

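k8s high availability

k8s-master02 node: the certificates listed below are shared by all master nodes, which means the cluster CA is one and the same. The remaining certificates have to be created again for each additional master. Because they live on different nodes they can keep the same file names, so the commands above can be reused unchanged.

    ca.crt
    ca.key
    front-proxy-ca.crt
    front-proxy-ca.key
    front-proxy-client.crt
    front-proxy-client.key

Check the endpoints:

    kubectl get endpoints
    NAME         ENDPOINTS                             AGE
    kubernetes   192.168.1.50:6443,192.168.1.51:6443   21h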

Testing high availability:

Look up which master currently holds the controller-manager leadership:

    kubectl get endpoints -n kube-system kube-controller-manager -o yaml | grep k8s-master

Stop the controller-manager service on that node:

    systemctl stop kube-controller-manager.service

Check again; if the holder has changed to another master, failover works.

    kubectl get endpoints -n kube-system kube-controller-manager -o yaml | grep k8s-master
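The grep above matches the leader-election annotation, whose value is a small JSON document with a holderIdentity field. A sketch for extracting just that field follows; the helper name holder_identity and the sample value are made up for illustration, and the sketch assumes the lock is stored on the Endpoints object as in the commands above (other versions may keep it elsewhere).

```shell
# Read text on stdin and print the value of "holderIdentity" if present.
holder_identity() (
  sed -n 's/.*"holderIdentity":"\([^"]*\)".*/\1/p'
)

# Illustrative sample of the annotation value, not real cluster output.
sample='{"holderIdentity":"k8s-master01_1f2e","leaseDurationSeconds":15,"leaderTransitions":3}'
printf '%s\n' "$sample" | holder_identity

# Against a live cluster:
#   kubectl get endpoints -n kube-system kube-controller-manager -o yaml | holder_identity
```

Running the live command before and after stopping the service shows the identity moving from one master to another.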

Layer-4 forwarding with nginx: the stream (layer-4 proxy) module requires nginx 1.9 or later; older versions need a manually compiled build.

    user nginx;
    worker_processes auto;
    error_log /var/log/nginx/error.log;
    pid /run/nginx.pid;

    include /usr/share/nginx/modules/*.conf;

    events {
        worker_connections 1024;
    }

    stream {
        upstream backend {
            hash $remote_addr consistent;    # consistent hashing
            server 192.168.1.50:6443 weight=5 max_fails=3 fail_timeout=60s;
            server 192.168.1.51:6443 weight=5 max_fails=3 fail_timeout=60s;
        }

        server {
            listen 6443;
            proxy_connect_timeout 1s;
            proxy_timeout 10s;
            proxy_pass backend;
        }
    }

Installing CoreDNS

Project page:

https://github.com/coredns/deployment/tree/master/kubernetes

Download the manifests and install:

    wget https://raw.githubusercontent.com/coredns/deployment/master/kubernetes/coredns.yaml.sed
    wget https://raw.githubusercontent.com/coredns/deployment/master/kubernetes/deploy.sh
    bash deploy.sh -i 10.96.0.10 -r "10.96.0.0/12" -s -t coredns.yaml.sed | kubectl apply -f -
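The DNS address passed with -i has to lie inside the Service range given with -r (the same range as --service-cluster-ip-range) and has to match clusterDNS in the kubelet config.yaml. The check below is a plain-shell sketch of the range test; ip_to_int and ip_in_cidr are hypothetical helpers written for this example.

```shell
# Convert a dotted IPv4 address to an integer.
ip_to_int() (
  IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# Usage: ip_in_cidr <ip> <network>/<prefix>; exit status 0 when inside.
ip_in_cidr() (
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  prefix=${2#*/}
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  [ $(( ip & mask )) -eq $(( net & mask )) ]
)

for ip in 10.96.0.1 10.96.0.10 10.244.0.1; do
  if ip_in_cidr "$ip" 10.96.0.0/12; then
    echo "$ip is inside 10.96.0.0/12"
  else
    echo "$ip is outside 10.96.0.0/12"
  fi
done
```

The first two addresses are the kubernetes Service IP from the certificate SANs and the DNS Service IP; the third belongs to the Pod network and is correctly reported as outside the Service range.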

Check that the Pods are READY:

    kubectl get pods -n kube-system

Resolution test:

    ~]# kubectl run busybox --image=busybox:1.28 --generator="run-pod/v1" -it --rm -- sh
    / # nslookup kube-dns.kube-system
    Server:    10.96.0.10
    Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

    Name:      kube-dns.kube-system
    Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local